A Perception-driven Hybrid Decomposition for Multi-layer Accommodative Displays

¹MPI Informatik, ²West Pomeranian University of Technology, Szczecin, ³Università della Svizzera italiana

Abstract

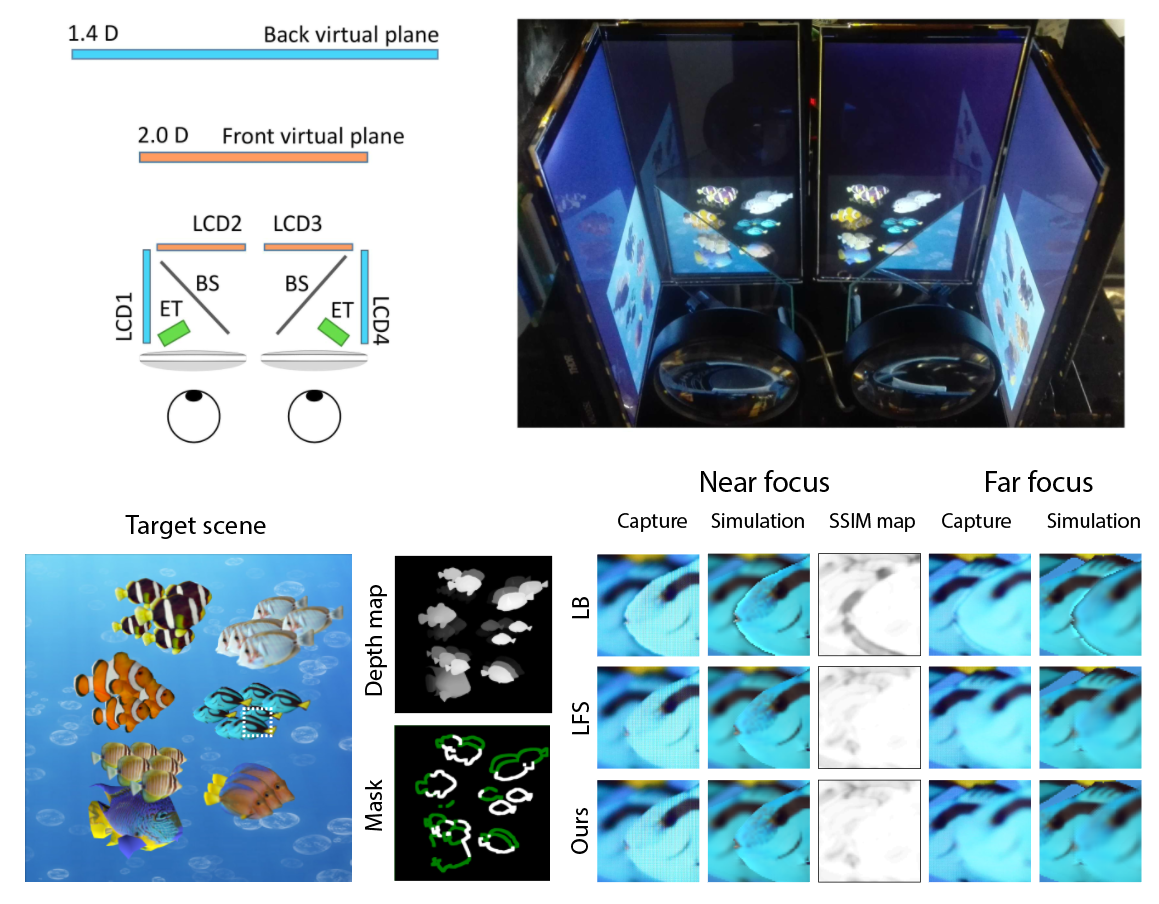

Multi-focal plane and multi-layered light-field displays are promising solutions for addressing all visual cues observed in the real world. Unfortunately, these devices usually require expensive optimizations to compute a suitable decomposition of the input light field or focal stack to drive individual display layers. Although these methods provide near-correct image reconstruction, their significant computational cost prevents real-time applications. A simple alternative is a linear blending strategy which decomposes a single 2D image using depth information. This method provides real-time performance, but it generates inaccurate results at occlusion boundaries and on glossy surfaces. This paper proposes a perception-based hybrid decomposition technique which combines the advantages of the above strategies and achieves both real-time performance and high-fidelity results. The fundamental idea is to apply expensive optimizations only in regions where they are perceptually superior, e.g., depth discontinuities at the fovea, and fall back to less costly linear blending otherwise. We present a complete, perception-informed analysis and model that locally determine which of the two strategies should be applied. The prediction is later utilized by our new synthesis method which performs the image decomposition. The results are analyzed and validated in user experiments on a custom multi-plane display.
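To make the linear blending strategy mentioned above concrete, the following is a minimal sketch of depth-based linear blending, not the implementation used in the paper. It assumes that depths are given in diopters, that the focal planes are sorted in ascending order, and that the image is a flat list of intensities; the function names `linear_blend_weights` and `decompose` are hypothetical and chosen only for illustration.

```python
from bisect import bisect_right


def linear_blend_weights(depth, plane_depths):
    """Per-plane weights for one pixel.

    Assumptions (illustrative only): `depth` and `plane_depths` are in
    diopters, and `plane_depths` is sorted in ascending order.
    """
    weights = [0.0] * len(plane_depths)
    # Pixels outside the covered depth range go entirely to the nearest plane.
    if depth <= plane_depths[0]:
        weights[0] = 1.0
        return weights
    if depth >= plane_depths[-1]:
        weights[-1] = 1.0
        return weights
    # Find the two planes that bracket the pixel depth.
    hi = bisect_right(plane_depths, depth)
    lo = hi - 1
    # Split the intensity linearly according to the distance to each plane.
    t = (depth - plane_depths[lo]) / (plane_depths[hi] - plane_depths[lo])
    weights[lo] = 1.0 - t
    weights[hi] = t
    return weights


def decompose(image, depth_map, plane_depths):
    """Split a flat list of pixel intensities into one layer per focal plane."""
    layers = [[0.0] * len(image) for _ in plane_depths]
    for i, (value, depth) in enumerate(zip(image, depth_map)):
        for k, w in enumerate(linear_blend_weights(depth, plane_depths)):
            layers[k][i] = w * value
    return layers


if __name__ == "__main__":
    planes = [0.0, 1.0, 2.0]  # hypothetical focal-plane positions
    for layer in decompose([1.0, 0.8], [0.0, 1.5], planes):
        print(layer)
```

Because each pixel is assigned using only its own depth value, a blend of this kind is cheap to compute, but it cannot represent content that lies at several depths along one line of sight. This is consistent with the inaccuracies at occlusion boundaries and on glossy surfaces that the abstract attributes to linear blending.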

Materials

- Preprint (PDF, 29.6 MB)

- Supplementary material (PDF, 95.4 KB)

- Supplementary video (MP4, 130 MB)

Citation

Hyeonseung Yu, Mojtaba Bemana, Marek Wernikowski, Michał Chwesiuk, Okan Tarhan Tursun, Gurprit Singh, Karol Myszkowski, Hans-Peter Seidel, Piotr Didyk

A Perception-driven Hybrid Decomposition for Multi-layer Accommodative Displays

IEEE Transactions on Visualization and Computer Graphics, vol. 25, no. 5, pp. 1940-1950, May 2019. (Presented at IEEE VR)

Acknowledgments

The authors would like to thank Oliver Mercier for helpful discussions. The project was supported by the Fraunhofer and Max Planck cooperation program within the German pact for research and innovation (PFI). This project has also received funding from the European Union's Horizon 2020 research and innovation programme under the Marie Skłodowska-Curie grant agreement No 642841 (DISTRO), and from the European Research Council (ERC) (grant agreement No 804226/PERDY). The project was partially funded by the Polish National Science Centre (decision number DEC-2013/09/B/ST6/02270).